Hugo Proença, Senior Member, IEEE

Abstract: The concept of periocular biometrics has been gaining relevance, in particular as a way to improve the robustness of iris recognition to degraded data. This paper proposes an atomistic periocular recognition algorithm, in the sense that it describes a recognition ensemble made of two disparate components with radically different properties. The stronger expert analyses the iris texture and exhaustively exploits the multi-spectral information in visible-light data. Complementarily, a second expert parameterises the shape of the eyelids and defines a surrounding dimensionless region-of-interest, from which statistics of the eyelids, eyelashes and skin wrinkles/furrows are encoded. Both experts work on disjoint data and use very different encoding/matching strategies, meeting three important properties: 1) the experts produce practically independent responses, which underlies the better performance of the ensemble when compared to the best individual recogniser; 2) the experts are not particularly sensitive to the same image covariates, which increases robustness against degraded data; and 3) the experts disregard information in the periocular region that can be easily forged (e.g., the shape of the eyebrows), which can be regarded as an active anti-counterfeit measure. An empirical evaluation was conducted on two public data sets (FRGC and UBIRIS.v2), and it points to consistent improvements in the performance of the proposed ensemble over state-of-the-art periocular recognition algorithms.
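This page does not state the fusion rule used by the ensemble. The sketch below is only an illustration of score-level fusion of two disparate experts and of checking whether their responses are correlated. It assumes that each expert returns one dissimilarity score per comparison, uses min-max normalisation followed by a weighted sum, and sets the weight `w_iris = 0.7` as an arbitrary placeholder. None of these choices come from the paper. Note also that Pearson correlation only detects linear dependence, so a value near zero is evidence of near-independence rather than proof of it.

```python
import numpy as np


def min_max_normalise(scores) -> np.ndarray:
    """Linearly map one expert's raw scores onto [0, 1]."""
    scores = np.asarray(scores, dtype=float)
    lo, hi = scores.min(), scores.max()
    if hi == lo:  # degenerate case: every score is identical
        return np.zeros_like(scores)
    return (scores - lo) / (hi - lo)


def fuse_scores(iris, ocular, w_iris: float = 0.7) -> np.ndarray:
    """Weighted-sum fusion of the two normalised experts.

    w_iris is a hypothetical weight favouring the stronger (iris) expert;
    in practice it would be tuned on held-out comparisons.
    """
    return w_iris * min_max_normalise(iris) + (1.0 - w_iris) * min_max_normalise(ocular)


def response_correlation(iris, ocular) -> float:
    """Pearson correlation between both experts' scores on the same comparisons.

    Values near 0 indicate linearly uncorrelated responses, the property
    that makes fusing the experts worthwhile.
    """
    return float(np.corrcoef(iris, ocular)[0, 1])


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic scores for 1000 comparisons, drawn independently for each expert.
    iris = rng.normal(0.35, 0.05, 1000)
    ocular = rng.normal(0.50, 0.10, 1000)
    fused = fuse_scores(iris, ocular)
    print(f"correlation = {response_correlation(iris, ocular):+.3f}")
    print(f"fused scores lie in [{fused.min():.2f}, {fused.max():.2f}]")
```

Weighted-sum fusion after normalisation is one of the simplest score-level rules. A learned combiner, such as a classifier trained on pairs of expert scores, would be a drop-in alternative.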

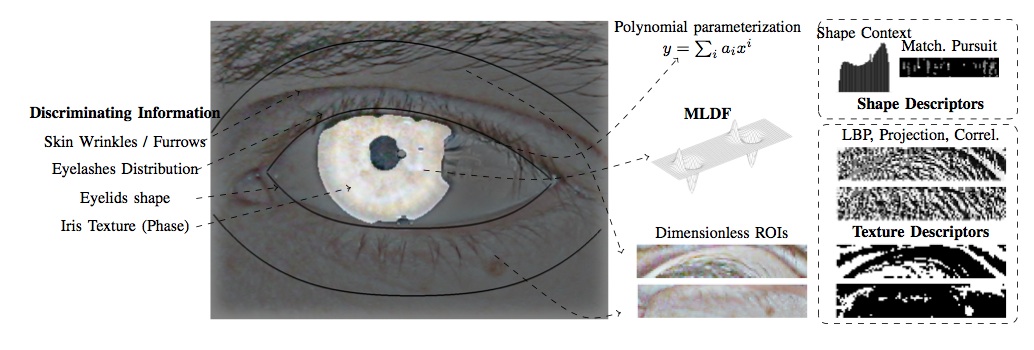

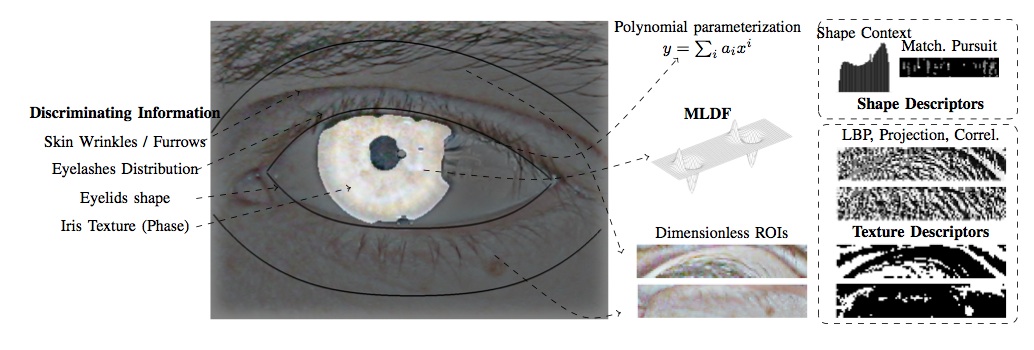

Fig. 1: Overview of the ensemble recognition method proposed in this paper. A strong biometric expert encodes the information inside the iris using multi-lobe differential filters. The weak expert is based on the polynomial parameterisation of the shape of the visible cornea, from which two dimensionless regions-of-interest are defined. Shape and texture descriptors encode the discriminating information.
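The caption names multi-lobe differential filters (MLDF) as the encoder of the strong iris expert. The sketch below follows the general MLDF construction from ordinal-measure iris recognition. Gaussian lobes of opposite polarity are combined into a zero-sum kernel, and only the sign of the filter response is kept as a binary code, which is then matched by Hamming distance. The kernel size, σ, lobe spacing, single grey-level channel and Hamming matcher are all illustrative assumptions and are not the parameters of this work. The multi-spectral handling described in the abstract is omitted.

```python
import numpy as np


def gaussian_lobe(size: int, centre: tuple, sigma: float) -> np.ndarray:
    """Isotropic 2-D Gaussian on a size x size grid centred at `centre`, unit sum."""
    r = np.arange(size) - (size - 1) / 2.0
    yy, xx = np.meshgrid(r, r, indexing="ij")
    g = np.exp(-((yy - centre[0]) ** 2 + (xx - centre[1]) ** 2) / (2.0 * sigma ** 2))
    return g / g.sum()


def mldf_kernel(size=15, sigma=2.0, spacing=5.0, lobes=("+", "-")) -> np.ndarray:
    """Multi-lobe differential filter: positive lobes minus negative lobes.

    Lobes sit on the horizontal axis, `spacing` pixels apart. Each polarity is
    scaled to unit mass, so the kernel sums to zero (no response to flat regions).
    """
    if "+" not in lobes or "-" not in lobes:
        raise ValueError("need at least one positive and one negative lobe")
    offsets = (np.arange(len(lobes)) - (len(lobes) - 1) / 2.0) * spacing
    pos = sum(gaussian_lobe(size, (0.0, o), sigma) for o, s in zip(offsets, lobes) if s == "+")
    neg = sum(gaussian_lobe(size, (0.0, o), sigma) for o, s in zip(offsets, lobes) if s == "-")
    return pos / pos.sum() - neg / neg.sum()


def ordinal_code(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Binary iris code: sign of the filter response.

    Filtering is done by circular convolution through the FFT; wrap-around is
    natural along the angular (column) axis of a normalised polar iris image.
    """
    kh, kw = kernel.shape
    padded = np.zeros(image.shape, dtype=float)
    padded[:kh, :kw] = kernel
    # Move the kernel centre to the origin so the response is not shifted.
    padded = np.roll(padded, (-(kh // 2), -(kw // 2)), axis=(0, 1))
    response = np.real(np.fft.ifft2(np.fft.fft2(image) * np.fft.fft2(padded)))
    return response > 0


def hamming_distance(code_a: np.ndarray, code_b: np.ndarray) -> float:
    """Fraction of disagreeing bits (0 = identical codes, about 0.5 = unrelated)."""
    return float(np.mean(code_a != code_b))


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    k = mldf_kernel(lobes=("+", "-", "+"))  # tri-lobe variant
    a = rng.random((64, 256))  # stand-in for a normalised iris image
    b = rng.random((64, 256))
    print(hamming_distance(ordinal_code(a, k), ordinal_code(a, k)))  # prints 0.0
    print(hamming_distance(ordinal_code(a, k), ordinal_code(b, k)))  # close to 0.5
```

Because the kernel sums to zero, the code depends on local intensity ordering rather than on absolute brightness. This is the motivation usually given for ordinal filters of this kind.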

Datasets

- Comps_UBIRIS.txt: List of images and corresponding pairwise comparisons from the UBIRIS.v2 data set.

- Comps_FRGC.txt: List of images and corresponding pairwise comparisons from the FRGC data set.
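The comparison lists define which image pairs are matched in the evaluation. The sketch below shows how such a list could feed a simple evaluation. It assumes, without confirmation from this page, that each line of Comps_UBIRIS.txt or Comps_FRGC.txt holds two whitespace-separated image names. The identity rule, the toy matcher and the choice of the decidability index d' as the summary statistic are hypothetical illustrations, not the protocol of the paper.

```python
import math
import statistics
from typing import Callable, Iterable, List, Tuple


def read_pairs(lines: Iterable[str]) -> List[Tuple[str, str]]:
    """Parse comparison pairs, ASSUMING one whitespace-separated pair per line."""
    pairs = []
    for line in lines:
        fields = line.split()
        if len(fields) >= 2:  # skip blank or malformed lines
            pairs.append((fields[0], fields[1]))
    return pairs


def split_scores(pairs, score_fn: Callable[[str, str], float],
                 identity_fn: Callable[[str], str]):
    """Score every pair and route it to the genuine or impostor list."""
    genuine, impostor = [], []
    for a, b in pairs:
        target = genuine if identity_fn(a) == identity_fn(b) else impostor
        target.append(score_fn(a, b))
    return genuine, impostor


def decidability(genuine: List[float], impostor: List[float]) -> float:
    """Decidability index d' = |mu_g - mu_i| / sqrt((var_g + var_i) / 2)."""
    mg, mi = statistics.fmean(genuine), statistics.fmean(impostor)
    vg, vi = statistics.pvariance(genuine), statistics.pvariance(impostor)
    return abs(mg - mi) / math.sqrt((vg + vi) / 2.0)


def subject_of(name: str) -> str:
    """Hypothetical identity rule: subject ID is the file-name prefix before '_'."""
    return name.split("_")[0]


if __name__ == "__main__":
    # Toy stand-in for the contents of a comparisons file (real layout may differ).
    lines = ["s1_a.tiff s1_b.tiff", "s1_a.tiff s2_a.tiff", "", "s2_a.tiff s2_b.tiff"]
    pairs = read_pairs(lines)
    # Hypothetical matcher: a lookup table of dissimilarity scores.
    toy_scores = {("s1_a.tiff", "s1_b.tiff"): 0.20,
                  ("s1_a.tiff", "s2_a.tiff"): 0.48,
                  ("s2_a.tiff", "s2_b.tiff"): 0.25}
    gen, imp = split_scores(pairs, lambda a, b: toy_scores[(a, b)], subject_of)
    print(gen, imp)  # prints [0.2, 0.25] [0.48]
    print(f"d' = {decidability(gen, imp):.2f}")
```

With real data, a file would be read with `open(...)` and passed directly to `read_pairs`, because iterating over a file object yields its lines.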

Source Code

- FusionOcular.zip: (Available soon) Zip file with the ".m" source files implemented in the scope of this work. Note that some functions require third-party packages that you might need to download.